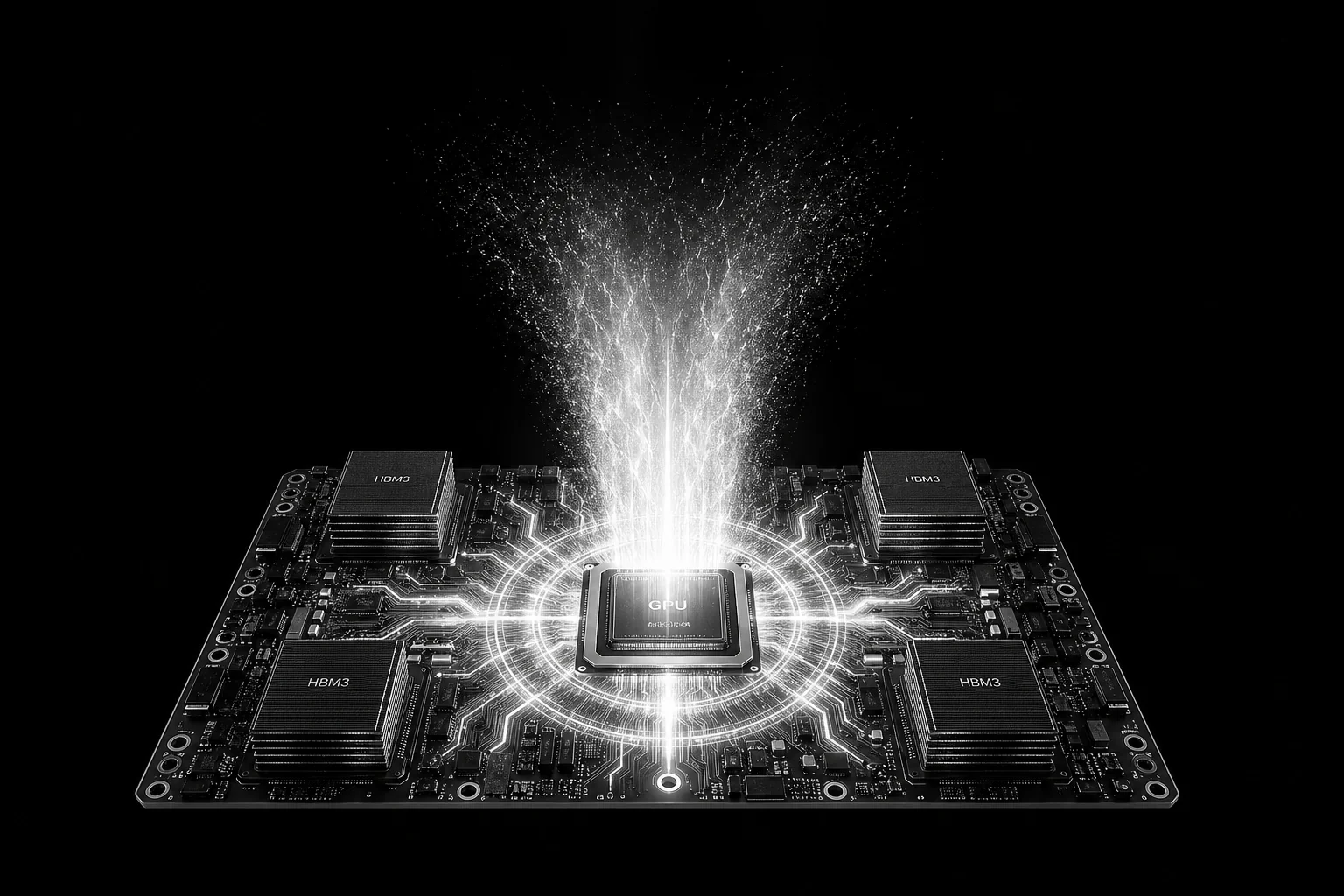

Your GPUs are fast. Your infrastructure isn’t.

The performance you’re leaving on the table isn’t in your model. It’s in your stack.

Platform

More from every GPU you already run.

Your models and serving pipelines stay as they are. Same weights, APIs, schedulers, and containers. Nothing upstream has to move.

Deep Variance is the runtime layer underneath: it recovers wasted memory, cuts repeated work during serving, and runs leaner on the chip.

Optimized in production

Install once.

Run more for less.

+65%

More memory

Fit bigger models, or serve more users at the same time.

6×

Inference speed

Real-time tuning unlocks more tokens and more requests per GPU.

-50%

Energy per token

Cut power draw per token. Lower cost, smaller fleet, same SLA.

Headline figures are upper bounds. Tested on supercomputing infrastructure with 600× NVIDIA H100s.

Works with what you already run.

No new framework. No new scheduler. No code changes.

Frameworks

Silicon, shipping today